Amazon Web Services has announced the public release of its Trainium3 AI chip, a custom processor designed to make training artificial-intelligence models cheaper and faster. The move positions Amazon as a growing competitor to Nvidia in the data-center chip market.

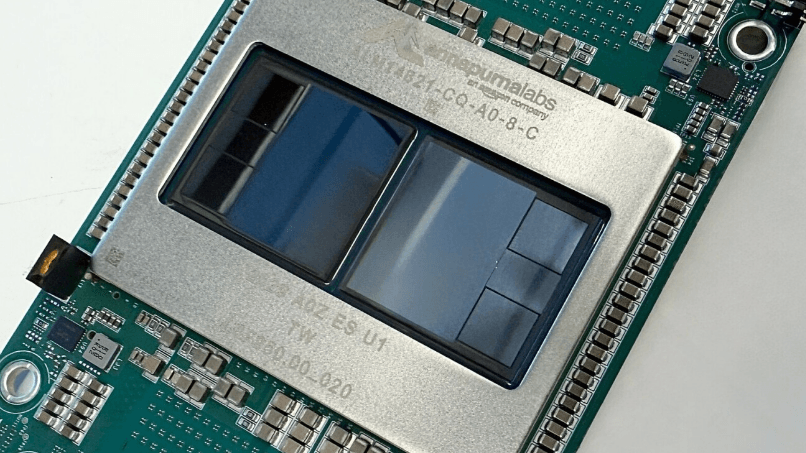

AWS said the Trainium3 chip is up to four times faster than the previous generation and can cut AI training and inference costs by as much as 50% compared with similar GPU-based systems. The chip was designed by Annapurna Labs, Amazon’s in-house silicon division, and arrives as AI companies look to diversify their supply of high-performance chips amid strong global demand and limited GPU availability.

The company also launched Amazon EC2 Trn3 UltraServers, powered by the Trainium3 chip, which is built on 3-nanometer technology. AWS said these systems allow organizations to train larger AI models, reduce latency, and support more users at lower operational cost, giving both startups and major enterprises access to infrastructure that previously required massive investment.

What Are Trainium3 UltraServers?

Trainium3 UltraServers are AWS’s newest high-performance compute systems built specifically for advanced AI workloads. Each UltraServer can host up to 144 Trainium3 chips, delivering as much as 4.4 times more compute power, four times greater energy efficiency, and almost four times more memory bandwidth than the previous generation.

These servers help companies train complex models in less time, run real-time generative AI, and scale inference workloads to millions of users.

How Trainium3 Works

Trainium3 achieves its performance gains through a combination of new chip architecture, faster interconnects, and improved memory systems that reduce data bottlenecks. According to AWS, the chip also uses 40% less energy than the previous generation, helping companies reduce both costs and environmental impact.

AWS says testing with GPT-OSS, an open-weight AI model, showed three times higher throughput per chip and four times faster response times than the Trainium2 generation.

Advanced Networking and Scaling

A major upgrade in Trainium3 systems is AWS’s new NeuronSwitch-v1, which doubles bandwidth inside each UltraServer. An improved Neuron Fabric network reduces communication delays between chips to under 10 microseconds — a critical improvement for training massive models or running real-time applications such as conversational AI, agentic systems, and reinforcement-learning workloads.

With the EC2 UltraClusters 3.0 architecture, customers can scale to clusters of up to 1 million Trainium chips, enabling the training of multimodal foundation models on trillion-token datasets and the serving of millions of concurrent inference requests.
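To put the cluster figure in perspective, the short Python sketch below estimates how many UltraServers a cluster of that size would span. It uses only the two numbers reported in this article (up to 144 chips per UltraServer and up to 1 million chips per cluster) and assumes, purely for illustration, that every chip sits in a fully populated Trainium3 UltraServer; AWS has not described the cluster layout this way, and the function name is hypothetical.

```python
# Figures reported in the article; the full-UltraServer layout is an assumption.
CHIPS_PER_ULTRASERVER = 144   # maximum Trainium3 chips per UltraServer
CLUSTER_CHIPS = 1_000_000     # upper bound cited for EC2 UltraClusters 3.0


def ultraservers_needed(total_chips: int,
                        chips_per_server: int = CHIPS_PER_ULTRASERVER) -> int:
    """Return the smallest number of servers that can hold total_chips."""
    if total_chips < 0 or chips_per_server <= 0:
        raise ValueError("chip counts must be non-negative and server size positive")
    # Integer ceiling division avoids floating-point rounding.
    return -(-total_chips // chips_per_server)


if __name__ == "__main__":
    servers = ultraservers_needed(CLUSTER_CHIPS)
    print(f"{CLUSTER_CHIPS:,} chips would span about {servers:,} UltraServers")
```

Under those assumptions, a maximum-size cluster would span roughly 6,945 UltraServers, which helps explain why chip-to-chip latency and switch bandwidth feature so prominently in the announcement.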

Customers Already Using Trainium

Companies including Anthropic, Karakuri, Metagenomi, NetoAI, Ricoh and Splash Music reported up to 50% savings on model-training costs using Trainium. AWS said its own Amazon Bedrock service is already running production workloads on Trainium3.

Decart, an AI lab focused on generative video, is using Trainium3 to produce real-time video frames four times faster and at half the cost of GPUs — making advanced generative content feasible at scale.

What’s Next: Trainium4

AWS confirmed that Trainium4 is already in development. The next-generation chip is expected to deliver:

- at least 6× processing performance (FP4)

- 3× FP8 performance

- 4× more memory bandwidth

Trainium4 will also support Nvidia’s NVLink Fusion technology, enabling mixed-infrastructure racks using both AWS Trainium chips and Nvidia GPUs.
