Physical AI in robotics is no longer science fiction or a futuristic niche: it is swiftly becoming a widespread commercial reality across manufacturing, logistics, healthcare, and consumer markets. Cristiano Amon, CEO of semiconductor giant Qualcomm, says humanoid robotics is the next trillion-dollar frontier for AI.

In 2025, the value of global industrial robot installations hit an all-time high of US $16.7 billion, reinforcing the shift toward intelligent robotics adoption driven by labor shortages, digital transformation, and supply chain modernization. The 2026 wave of enterprise deployments and industry forecasts from Deloitte and Morgan Stanley suggest that robotics powered by physical AI, where machines sense, reason, and act autonomously in the real world, is driving breakthroughs beyond repetitive automation toward adaptive, learning-capable systems.

Across the United States, EU, China, Canada, Germany, Australia, and other tech hubs, robotics innovations are penetrating core operational functions and economic infrastructure — marking 2026 as a watershed year for robotic automation that genuinely interacts with the physical world.

What Is Physical AI in Robotics?

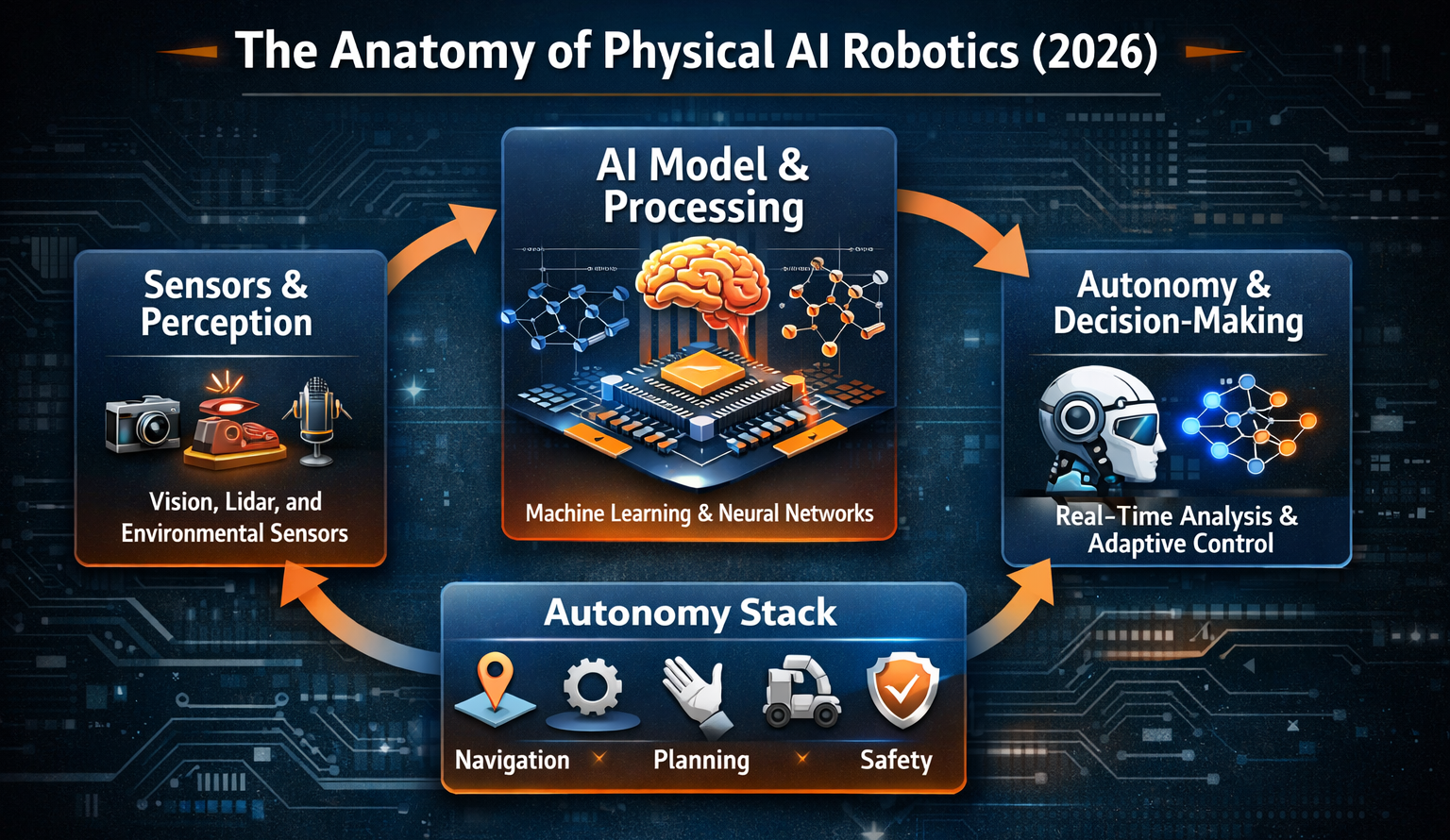

Physical AI in robotics refers to machines that combine artificial intelligence with real-world sensory perception and physical actuation — enabling them to understand, reason, and act in dynamic environments without rigid pre-programming.

Traditional robots excel at rule-based tasks on fixed lines, like welding or packaging. In contrast, physical AI robots perceive their surroundings, interpret context, and make decisions much as a human worker would, but with machine precision and resilience. This integration of vision, language understanding, sensor fusion, and autonomous motion creates a new class of machines capable of navigating unstructured environments like warehouses, construction sites, and public spaces.

For example, instead of simply stacking boxes on a conveyor, a physical AI robot can identify damaged packages, reroute inventory, and adapt its task sequence in real-time, all while communicating with adjacent systems for safety and efficiency.
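The damaged-package scenario above can be pictured as a simple perceive-then-decide step. The following is a minimal sketch only: the `Package` type, the `damaged` flag (standing in for the output of some vision model), and the action names are all hypothetical, not taken from any real robot platform.

```python
from dataclasses import dataclass


@dataclass
class Package:
    package_id: str
    damaged: bool  # assumed to come from a hypothetical vision model


def plan_actions(packages):
    """Return (package_id, action) pairs: divert damaged items, stack the rest."""
    actions = []
    for pkg in packages:
        if pkg.damaged:
            # Damaged items leave the normal flow instead of being stacked.
            actions.append((pkg.package_id, "divert_to_inspection"))
        else:
            actions.append((pkg.package_id, "stack"))
    return actions


if __name__ == "__main__":
    batch = [Package("A1", False), Package("A2", True), Package("A3", False)]
    for package_id, action in plan_actions(batch):
        print(package_id, action)
```

A real system would replace the boolean flag with live perception and would also coordinate with neighbouring equipment, but the structure (sense, decide per item, emit actions) stays the same.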

Core Breakthroughs Driving Physical AI in 2026

Recent breakthroughs have significantly expanded the capabilities and commercial viability of Physical AI robotics. These advances are enabling robots to operate more autonomously, safely, and efficiently in real-world environments.

1. Edge-Optimized Intelligence Meets Physical Interaction

The shift from centralized cloud-based AI to edge inference at scale is enabling robots to make split-second decisions locally — critical in scenarios where latency or connectivity matters (e.g., factory floors or autonomous delivery). This architectural change allows physical AI systems to better integrate sensory data, simulation feedback, and actuation control without constant cloud reliance.
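One way to see why latency pushes inference to the edge is to compare each option's total delay against a per-decision deadline. The sketch below is illustrative only: the function name, parameters, and every number in the usage example are assumptions, not measurements from any real deployment.

```python
def choose_backend(deadline_ms, edge_inference_ms, cloud_inference_ms, network_rtt_ms):
    """Pick where to run inference for one decision.

    Returns the lowest-latency option that meets the deadline ("edge" or
    "cloud"), preferring the edge on a tie, or None if neither option fits.
    """
    options = {
        "edge": edge_inference_ms,
        # The cloud pays for the network round trip on top of inference time.
        "cloud": cloud_inference_ms + network_rtt_ms,
    }
    feasible = {name: total for name, total in options.items() if total <= deadline_ms}
    if not feasible:
        return None
    return min(feasible, key=feasible.get)


if __name__ == "__main__":
    # Hypothetical numbers: a tight 20 ms control deadline rules out the cloud.
    print(choose_backend(deadline_ms=20, edge_inference_ms=15,
                         cloud_inference_ms=5, network_rtt_ms=40))
```

With a looser deadline and a slower on-board model, the same function can return "cloud", which is why the trade-off depends on the task rather than being fixed.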

This fusion of vision, language, and action into a single model, often termed a Vision-Language-Action (VLA) model, equips robots to interpret complex scenes and execute nuanced tasks. Such models can handle a range of affordances, from grasping unpredictable objects to navigating human-centred spaces, narrowing the gap between digital intelligence and physical action.

2. Humanoids and Adaptive Platforms Enter Enterprise Trials

Unlike traditional rigid automation, humanoid robots powered by physical AI are being pilot-deployed in workplaces where tasks are diverse, unpredictable, and require contextual judgment.

For instance, Austin-based Apptronik secured US $520 million to scale its Apollo humanoid robot, designed to operate safely alongside humans in warehouses and factories.

In parallel, collaborations like Google DeepMind’s integration of Gemini AI into Boston Dynamics’ robotic platforms aim to equip robots with reasoning and environment-adaptation capabilities, a significant leap from predefined movement sequences.

This trend underscores a broader pivot: robotics innovations are no longer siloed research artefacts; they are enterprise-ready assets targeting broad adoption in manufacturing, logistics, and service industries in 2026.

3. Industrial Robots Evolve Into Physical AI Systems

Physical AI robotics and dark factory models (fully automated, lights-out plants) are rapidly replacing traditional manufacturing operations around the world.

Instead of fixed robotic arms with static instructions, manufacturers are deploying systems capable of:

- Perceiving component variability and adapting processes

- Optimizing sequences without human intervention

- Learning from real-world interactions in operational cycles

These capabilities help address talent shortages and unlock productivity gains, which is why around 82% of industrial companies recognize AI as a strategic growth driver.

Across Germany’s automotive manufacturing corridor, China’s massive production clusters, and advanced facilities in the United States and Canada, physical AI robotics is setting new benchmarks for operational resilience and continuous improvement.

Key AI Robotics Trends Shaping 2026

AI robotics is already delivering measurable value across a number of industries. Enterprises are adopting these systems for scalability, safety, and efficiency.

AI-Powered Design and Agentic Workflows

Robotics systems are no longer static machines: they are becoming cognitive agents capable of anticipating, planning, and executing complex workflows based on real-time environmental understanding.

For example, robots are moving beyond programmed tasks toward “agentic robotics” — workflows where the robot can decide autonomously how best to achieve an objective, whether reorganizing stock routes or prioritizing high-value items during peak operations.
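The idea of a robot choosing its own task order can be sketched with a priority queue. This is a toy illustration under stated assumptions: the scoring rule, the peak-time weight of 2.0, and the task names are invented for the example and do not describe any vendor's planner.

```python
import heapq


def order_tasks(tasks, peak=False):
    """Order tasks by a simple score, highest first.

    tasks: list of (task_name, item_value, waiting_minutes) tuples.
    During peak operations item value is weighted more heavily (assumed weight).
    """
    value_weight = 2.0 if peak else 1.0
    heap = []
    for index, (name, value, waiting) in enumerate(tasks):
        score = value_weight * value + waiting
        # heapq is a min-heap, so push the negated score; index breaks ties.
        heapq.heappush(heap, (-score, index, name))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


if __name__ == "__main__":
    tasks = [("restock_aisle_3", 10, 30), ("pick_order_77", 25, 5)]
    print(order_tasks(tasks, peak=False))
    print(order_tasks(tasks, peak=True))
```

In this example the long-waiting restock job goes first off-peak, while the higher-value pick jumps ahead during peak hours, which mirrors the prioritization described above.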

Hybrid Collaborative Ecosystems — Human + Robot Synergy

In 2026, robotics is embracing a collaborative paradigm. Cobots (collaborative robots) and intelligent automation systems are designed to work with human teams rather than replace them — particularly in contexts where nuance, judgment, and safety interplay with scaling requirements.

This synergy appears across sectors — from precision tasks in automotive assemblies to logistics sites where robot teams handle heavy lifting while humans manage exceptions.

Smart Infrastructure Integration and the Digital Twin

Integrating physical AI robotic systems with smart factory architectures (e.g., digital twins, analytics platforms) creates a real-time model of physical spaces and workflows, which in turn enables closed-loop optimization.

As real-world conditions change — shifts in supply flow, labor availability, or demand spikes — these systems self-adjust, surfacing bottlenecks before they impact throughput and suggesting corrective actions automatically.
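As a rough illustration of how a digital twin might surface bottlenecks, the sketch below flags stations whose utilization (arrival rate divided by capacity) reaches a threshold. The station names, rates, and the 0.9 threshold are hypothetical, and a real twin would draw these values from live telemetry rather than a hard-coded dictionary.

```python
def find_bottlenecks(stations, threshold=0.9):
    """Return (station, utilization) pairs at or above the threshold.

    stations: dict mapping station name -> (arrival_rate, capacity), both in
    units per hour. Capacity is assumed to be greater than zero.
    Results are sorted with the most heavily loaded station first.
    """
    flagged = []
    for name, (arrival_rate, capacity) in stations.items():
        utilization = arrival_rate / capacity
        if utilization >= threshold:
            flagged.append((name, round(utilization, 2)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    twin_snapshot = {
        "picking": (95, 100),
        "packing": (120, 100),
        "loading": (40, 100),
    }
    for station, utilization in find_bottlenecks(twin_snapshot):
        print(station, utilization)
```

Running the check on each refreshed snapshot is one simple way to flag an overloaded station before throughput actually drops.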

This is particularly significant in the EU and Australian manufacturing ecosystems, where regulatory frameworks encourage digital twin adoption to improve productivity and safety.

Real-World Applications of Physical AI Robotics

Manufacturing and Smart Factories

Physical AI robotics in manufacturing is transforming output with dynamic adaptation capabilities that respond to variability in materials, environment, and end-product requirements.

For instance, physical AI robots can:

- Identify and correct tolerances in real-time

- Support decentralized workflows across global facilities

- Empower flexible mass customization — critical for automotive, electronics, and aerospace industries

In Germany’s industrial heartland and major Chinese production hubs, these systems enable near-continuous production while reducing downtime and recall rates.

Logistics and Warehousing

In logistics centres, physical AI robotics is critical for handling unpredictable tasks: inventory rebalancing, exception routing, and autonomous vehicle coordination.

A notable real-world instance is the trial of delivery robot dogs in the UK capable of fulfilling food orders autonomously, showcasing how physical AI can enhance last-mile fulfilment for on-demand services.

These systems combine perception, obstacle negotiation, and route optimization — features that vastly expand the role of robotics beyond fixed conveyors and dock robot arms.

This transformation is not theoretical. Recent headlines such as “Amazon to Cut 600,000 Jobs as AI Takes Over—Here’s How to Secure Your Future” highlight the accelerating shift toward AI-driven automation in warehousing and distribution networks. As major retailers double down on robotics, machine vision, and autonomous systems, the workforce model is being fundamentally reshaped.

Healthcare and High-Precision Tasks

In high-risk environments like surgery or patient handling, physical AI robotics is emerging as a reliable partner in repetitive and accuracy-critical tasks.

Robots equipped with intelligent control systems can autonomously navigate operating suites or assistive environments — improving outcomes while reducing caregiver strain.

Regulatory, Ethical, and Safety Considerations

As physical AI robotics proliferates, governments and standards bodies across the US, EU, China, and Canada are crafting frameworks to ensure safe, ethical, and accountable deployment. Key focus areas include:

- AI safety protocols and explainability

- Human-robot interaction standards

- Workforce transitions and reskilling mandates

- Cybersecurity resilience of autonomous systems

For instance, the EU’s AI Act is shaping how physical AI systems are certified and audited before field deployment — ensuring that robots handling complex tasks meet predictable safety thresholds.

Looking Ahead Beyond 2026

Physical AI robotics will not just transform industrial automation — it will redefine the interplay between software intelligence and embodied action across every economic sector. Analysts project this shift as part of a long-term evolution where robotic workforce agents will complement human teams, accelerate innovation cycles, and enable new business models that were previously too costly or risky to pursue.

The next decade promises a societal and economic transformation where embodied intelligence is ubiquitous — from smart infrastructure to adaptive workplace platforms, shaping what it means to be productive, safe, and innovative.

FAQs

Q1: What is the difference between traditional robotics and physical AI robotics?

Traditional robotics executes preset tasks with limited adaptability, whereas physical AI robotics uses real-time perception and reasoning to interact with changing environments — enabling autonomous decision-making and fluid interactions.

Q2: How is physical AI used in manufacturing?

In manufacturing, physical AI enables robots to perceive variability on assembly lines, self-optimize production paths, and handle unpredictable materials without extensive reprogramming — improving productivity and reducing waste.

Q3: Are humanoid robots part of physical AI?

Yes. Humanoid robots represent an advanced use case of physical AI — integrating perception, motion, and adaptive reasoning to perform tasks traditionally done by humans in dynamic settings.

Q4: Will physical AI robotics take human jobs?

Rather than outright displacement, physical AI robotics is poised to augment human roles, particularly in complex, repetitive, or dangerous tasks — while creating demand for new skills in robotics management and workflow design.

Q5: What industries are leading in physical AI robotics adoption?

Manufacturing, logistics, healthcare, and large-scale distribution centres in the US, EU, China, Germany, Canada, and Australia are at the forefront, driven by skilled labour gaps and digital transformation priorities.

For more news and insights on emerging technologies, including AI, robotics, cybersecurity, blockchain, gaming, and the evolving gig economy, visit the home page of The Gignomist.